Last week, after Evidence Live, I posted a blog exploring the potential harms of doing rapid reviews (RRs). This post overlaps with that one, but I’ve had time to reflect and hopefully it is better written. I’ve also added a vote! It may need rewriting again, so if you think anything needs clarifying, please let me know!

The question I was asked was about the harm of potentially getting an RR wrong. In other words, if an RR said ‘drug A’ was effective and a systematic review (SR) said it was ineffective, there’s your harm: patients receiving ineffective treatments. The degree of harm would vary with the seriousness of the condition being treated. For a graze the harm would be minimal, but for cancer it could be catastrophic.

I believe the question was meant to suggest that RRs are inferior and that only an SR will do, defending that position on the grounds of reducing harm.

As I posted previously, the counterbalance to this is the opportunity cost. Say one SR costs the same as five RRs. Then the reduction in harm in the area covered by the single SR must be greater than the combined reduction in harm across the five areas covered by the RRs; otherwise the SR does more harm than the RRs. So, it’s:

Reduction in harm from one SR, PLUS the harm of completely unsynthesised care in the other four areas, VERSUS the reduction in harm across all five areas from RRs.
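To make the opportunity-cost comparison concrete, here is a minimal sketch. It takes the post’s assumption that one SR costs the same as five RRs, but every harm figure is invented, in arbitrary units, purely for illustration; the `Topic` class and both functions are hypothetical names, not an established method. Each topic starts with some baseline harm under unsynthesised care, and whichever review it gets avoids part of that harm.

```python
from dataclasses import dataclass

# Hypothetical model: all harm figures are invented, in arbitrary units.
@dataclass
class Topic:
    baseline_harm: float  # harm under completely unsynthesised care
    sr_reduction: float   # harm avoided if this topic gets a full SR
    rr_reduction: float   # harm avoided if this topic gets an RR


def residual_harm_one_sr(topics, sr_index=0):
    """Total harm left when the budget buys a single SR on topics[sr_index];
    every other topic stays unsynthesised."""
    total = 0.0
    for i, topic in enumerate(topics):
        avoided = topic.sr_reduction if i == sr_index else 0.0
        total += topic.baseline_harm - avoided
    return total


def residual_harm_rrs(topics):
    """Total harm left when the same budget buys one RR per topic."""
    return sum(topic.baseline_harm - topic.rr_reduction for topic in topics)


# Assumption from the post: one SR costs the same as five RRs.
# Topic 0 is where the SR would go; its RR is assumed slightly
# less effective than the SR there (35 vs 40).
topics = [Topic(100.0, 40.0, 35.0)] + [Topic(100.0, 40.0, 30.0) for _ in range(4)]

print(residual_harm_one_sr(topics))  # 460.0
print(residual_harm_rrs(topics))     # 345.0
```

With these made-up numbers the five RRs leave 345 units of harm against 460 for the single SR. Since the baseline harm is the same in both scenarios, the SR only comes out ahead if the harm it avoids in its one area exceeds the RRs’ combined 155 units, which is the inequality above.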

I have yet to see any evidence that reasonably conducted RRs reverse the advice an SR would give. Most published studies show very similar results. In a soon-to-be-published study I’m involved in, the worst that happened was either a change in statistical significance (from significant to non-significant, or vice versa) or a loss of data (where the RR reported no evidence, as opposed to the wrong answer).

So, the poll:

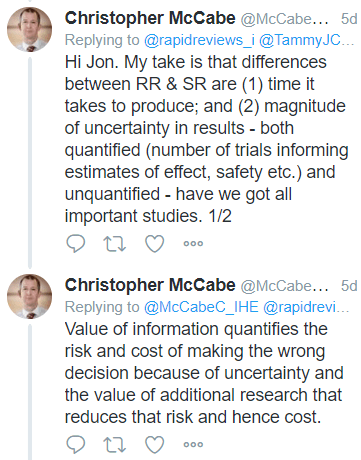

Thankfully, the answer need not rest on a poll alone: it can hopefully be decided via Value of Information, which is something I plan to explore with Christopher McCabe.

Please vote, comment, or suggest improvements to the post or the argument (one way or the other)!

Are SRs more likely to be accurate (right) because they take longer? I think the longer time and the larger number of people (unless they are patients) involved in a systematic review and meta-analysis make them more, not less, likely to be wrong, and harmfully wrong at that. Or, at best, irrelevant and out of date. The most useful “systematic” (as in takes forever) review includes a forensic examination of all the studies in an area of research, which, as is usually the case, shows that the research question cannot be answered with any degree of certainty. Assuming most evidence is weak, likely to be biased, or missing, a systematic review should include as part of its output a detailed plan or protocol for a well-designed study that will answer the question. Patients should be involved in designing this study to ensure that the question and outcomes are relevant. If patients and the public are meaningfully involved in producing a systematic review, then they are already there to help design a good primary study.

Lack of equipoise among the authors of systematic reviews can be a very big problem. Reviewers may want to confirm that their favoured treatments are effective, or at least not harmful. Imagine the lengths to which review authors will go to obscure the possible harm that an intervention they have been promoting for years has done, and may still be doing. Then there is the bias caused by selective reporting and non-reporting of studies with null results. Outcome switching and goalpost moving. Salami slicing causes confusion and bias too, e.g. reporting less flattering outcomes much later in a different journal. Lack of access to raw data. I could go on.

Rapid reviews are of course prone to the same biases, but they take a fraction of the time. However, they can’t be used to inform future research, at least in terms of study design. Both types of review are necessary, but if SRs continue to “compete” with RRs by boasting greater accuracy, rather than turning to the more constructive mission of working with patients and the public to improve primary research and reduce waste, they will become an anachronism.

In an ideal world, an SR and five RRs would essentially point to the same conclusion. Even then, the advantage of RRs is time: an SR, done correctly, takes longer than an RR. I’d trust five RRs over a single large SR.